Markets are currently fixated on energy access as the near-term bottleneck for data centers... but strong underlying fundamentals are still the key to long-term asset performance.

Water access is one of those fundamental factors, and this MIT Technology Review article on data centers and water constraints in Nevada is well worth a read.

Elements that caught my eye:

- Direct cooling demand could reach 0.9–5.7 billion gal / yr and electricity generation could indirectly add ~15 billion, but actual figures generally remain proprietary

- Tribal and local experts are working to highlight the risks of additional pressure on local systems

- Closed‑loop, water‑free air and immersion cooling could lead to meaningful demand reductions

How does the rest of the world stack up?

Our Othersphere platform includes over 10,000 existing data centers, with World Resources Institute Aqueduct 4.0 basin‑level water stress (0‑5 scale) included as one search attribute.

Today nearly 15% of data centers operate in the most water-stressed locations (4.75 to 5), and the general distribution of data centers implies that water hasn't really mattered to siting... at least to date.

But that may be changing as:

1️⃣ Leading operators raise the bar

2️⃣ Public scrutiny climbs

3️⃣ Cooling tech continues to improve

Reach out if you want to learn more, or see how your site or company portfolio fits into all of this.

When assessing data center sites, there can be a balancing act between remote locations and those closer to population centers.

Remote locations can offer clear advantages, including more building space or possibly lower-cost energy. But for AI inference, colocation, and other traditional compute needs, being closer to population centers of end-users is critical.

For data centers, fiber infrastructure plays a central role in that equation. Though factors like access to energy, community sentiment, and the permitting environment are a critical part of site assessments, this infrastructure is only as valuable as the user base it can reach.

To support this decision-making, we recently added a new data layer to inform Othersphere’s data center diligence engine, and we’re excited to share more about this work (to say nothing of the pretty maps).

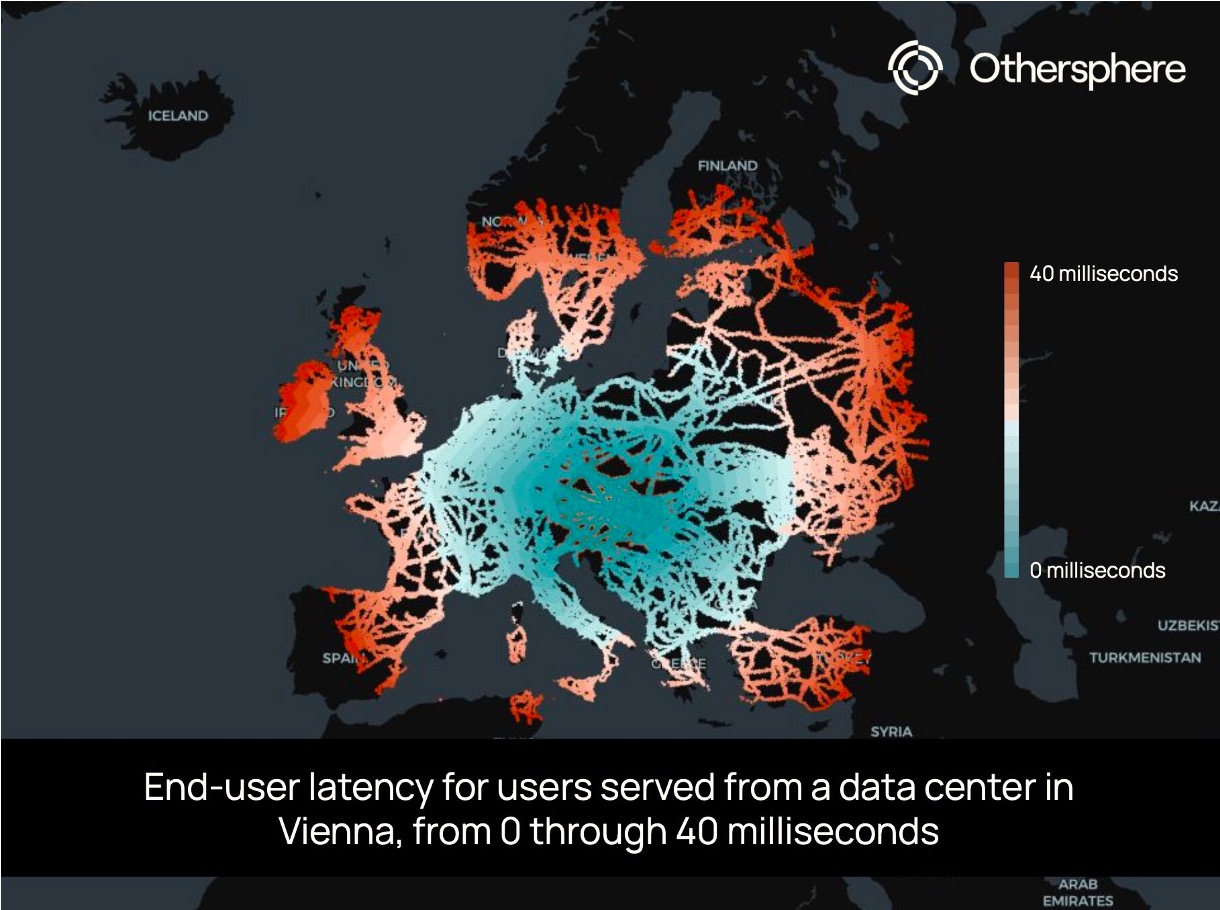

This new data layer provides an estimate of the population that can be reached from any location within certain latency thresholds. It adds an important dimension to site selection by helping clarify the tradeoffs between locations that may look attractive for different reasons.

This is made possible through our infrastructure analysis methodology, which indexes critical data to millions of locations and models the relationships within and between them. For this population estimate, the calculation begins by overlaying the world’s terrestrial and submarine fiber cable infrastructure on high-resolution population data. We then construct a weighted graph covering the entire globe, where adjacent tiles are connected by edges to calculate round-trip latency. As the signal moves from any user-selected location across on-fiber or off-fiber tiles, varying time penalties are applied. At a global scale, this requires simplifying assumptions on key questions such as available capacity, which we are able to investigate more deeply in each site-specific assessment.

This bottom-up estimate reveals stark geographic disparities. A well-connected tile in Europe (a randomly selected address in Vienna, in this case) can reach hundreds of millions of people within 40 ms, while a tile not far away in a telecom gap may only provide low-latency services to its immediate neighbors.
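
To make the idea concrete, here is a minimal sketch of a reachable-population calculation on a small tile grid. It is not Othersphere's actual model: the 4-neighbor grid, the function name `reachable_population`, and the per-tile penalty values `ON_FIBER_MS` and `OFF_FIBER_MS` are all illustrative assumptions, and capacity is ignored entirely. It runs Dijkstra's algorithm from an origin tile, charges a one-way time penalty for each tile entered, doubles that to get round-trip latency, and sums the population of every tile within the threshold.

```python
import heapq

# Hypothetical one-way penalties (ms) for entering a tile; not real calibration values.
ON_FIBER_MS = 0.5
OFF_FIBER_MS = 5.0


def reachable_population(population, on_fiber, origin, max_rtt_ms):
    """Sum the population of all tiles whose round-trip latency from
    `origin` is at most `max_rtt_ms`.

    population: 2D list of people per tile
    on_fiber:   2D list of booleans, True if the tile has fiber
    origin:     (row, col) of the candidate site
    """
    rows, cols = len(population), len(population[0])
    best = {origin: 0.0}          # lowest known one-way latency per tile
    queue = [(0.0, origin)]       # min-heap ordered by one-way latency

    while queue:
        cost, (r, c) = heapq.heappop(queue)
        if cost > best[(r, c)]:
            continue              # stale heap entry
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            step = ON_FIBER_MS if on_fiber[nr][nc] else OFF_FIBER_MS
            new_cost = cost + step
            if 2 * new_cost > max_rtt_ms:
                continue          # round trip would exceed the threshold
            if new_cost < best.get((nr, nc), float("inf")):
                best[(nr, nc)] = new_cost
                heapq.heappush(queue, (new_cost, (nr, nc)))

    return sum(population[r][c] for r, c in best)


# Toy grid: a fiber route along the top row, no fiber elsewhere.
population = [[100, 200, 300], [10, 20, 30], [1, 2, 3]]
on_fiber = [[True, True, True], [False, False, False], [False, False, False]]
print(reachable_population(population, on_fiber, (0, 0), max_rtt_ms=4.0))
```

In this toy grid a tight threshold only captures tiles along the fiber route, while a looser one starts to pull in off-fiber neighbors, which mirrors the tradeoff between well-connected tiles and those in a telecom gap.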

This data layer powers Othersphere’s understanding of where digital infrastructure creates (or fails to create) meaningful connectivity, and it is now threaded into all of our site assessments and scoring.

Reach out if you would like to learn more, or comment below if there are locations you’d like to see us post about.

Click here to access the Othersphere Data Center Atlas

What does the market view as a good data center location? This is something we can clearly observe based on how data centers are deployed today.

But how will the definition of a successful project evolve over time? And how might this differ across different classes of data centers?

Planning for the long term, it is important that markets and policymakers are intentional about their expectations and requirements for these assets, as the role of data centers in economic growth, energy demand, climate outcomes, and geopolitical stability will all undoubtedly increase.

In the near term, the risk of data center investment bubbles and market swings appears very high. As such, the ability to target data centers with strong fundamentals that will succeed in both good times and bad is essential.

History smiles on those who build commoditized industrial infrastructure in locations with the best fundamentals, so who will be the 'Saudi Arabia of compute'? The race to acquire power for these information refineries is the dominant narrative today. But when the smoke clears it will be key long-term fundamentals that matter to operators, policymakers, investors, communities, and beyond.

With this framing in mind, this Data Center Atlas is our contribution to inform a more data-driven view of the shape of the global data center fleet today, and how this may evolve over time.

At launch, this Atlas includes a sample of the metrics we use to evaluate existing and potential data center locations. Here we highlight trends at the thousands of sites around the world that host data centers today, drawn from the over 180 million locations that Othersphere models worldwide. We are also experimenting with AI-generated, human-reviewed context for each metric, with AI model outputs shaped by the data provided from our backend.

This living document is also a pilot for future report-centric products on data centers and other industrial sectors, helping us understand needs around:

Reach out to learn more or provide feedback, and in the meantime, thanks for reading!

Please reach out if you would like to learn more about Othersphere, our products, and opportunities to partner in accelerating deployment of high performance, sustainable infrastructure.